RBC | Last week I gave a quick overview of ChatGPT and what it can do. In this article I will explain its underlying computational circuitry and how it is trained. I will hack through some dense thickets and try to explain them with familiar analogies. The details can be daunting. I think they help you understand what’s going on, but feel free to skim over the tech jargon to the payoffs at the end. OpenAI’s technical report on GPT-4 is available online (OpenAI, 2023). You won’t need all the particulars to understand articles yet to come in this series.

The computer circuits underlying ChatGPT (and AI in general) are called deep neural networks. The goal of the neural network is to find the best fit of the network’s output to a prescribed target. The training process is like learning in general. Trying to improve your volleyball serve? Start with a practice serve. Target the far corner. How close did you come? Coach says turn a bit further sideways. Serve again. How close was that one? Coach says loft the ball a little higher in the air. Serve again. Over and over and over. Tweak your form as necessary. Develop muscle memory. Ball lands closer and closer to the corner – but with some variation from one serve to the next, of course. Neural networks follow the same rubric but adjust nodes and wires instead of nerves and muscles. The neural network runs millions of repetitions with tweaks in between, getting closer to the target. For ChatGPT, the target is the most probable next word that follows a given sequence of words.

Researchers devised the first neural nets more than 60 years ago to mimic brain function. The brain is an array of nerve cells, neurons, connected by biological wires, axons. Those early attempts at AI fizzled from lack of adequate computational power. But neural networks enjoyed a resurgence in the 2000s as computers became fast enough to exploit their capabilities.

Here is the architecture. Picture an array of LED lights, eight rows with one hundred LEDs in each row. Call each light a “node.” (The actual structure of GPT-4 is proprietary, but that’s all the nodes we’ll need to model it.) Run a wire from each node in the top row to each of the 100 nodes in the row below. Repeat, connecting row two to row three, three to four, and on down. Total connectivity is on the order of 100 trillion (a one followed by 14 zeros). That is, a signal starting at one node (light) on the top row can reach the bottom row via any of 100 trillion possible paths, because it has 100 wires to choose from at each of the seven steps down. Add another row of nodes and you increase the connectivity by another factor of 100, the next generation GPT.
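
For readers who like to check the arithmetic, here is a small sketch of the counting for this simplified array. The row and node counts are the ones from my LED picture, not the real (proprietary) GPT-4 figures, and the variable names are my own.

```python
# Counting wires and paths in the simplified 8-row, 100-node array.
# These sizes come from the LED analogy, not from the real GPT-4.
ROWS = 8
NODES_PER_ROW = 100

# Each pair of adjacent rows is fully wired: 100 x 100 wires per gap.
gaps = ROWS - 1
wires = gaps * NODES_PER_ROW * NODES_PER_ROW

# Starting from one top-row node, each gap offers 100 choices of next node.
paths_from_one_top_node = NODES_PER_ROW ** gaps

# A ninth row adds one more gap, hence one more factor of 100.
paths_with_extra_row = NODES_PER_ROW ** (gaps + 1)

print(f"wires: {wires:,}")
print(f"paths from one top node: {paths_from_one_top_node:,}")
print(f"paths with a ninth row: {paths_with_extra_row:,}")
```

Note how few wires there are compared with the number of paths. The network only has to store one weight per wire, yet the routes a signal can take number in the trillions.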

I’ve simplified the architecture. You can (neural network developers do) vary the connectivity and the weighting algorithms in different rows (see Dugas, 2023), but an array captures the essence.

Now imagine each node holds either a one or a zero, i.e. each light is either on or off. Imagine each wire has a “weighted gate,” e.g. a transistor. Gates with large weights are open wider, so it is easier to send a signal through the wire. The weights are adjusted by the machine itself, following its training rules. Each node in a row adds up all the signals coming through the wires from the row above. If the sum of all those signals exceeds a certain threshold, the node flips its state from one to zero or vice versa.
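
Here is a minimal sketch of a single node following that rule. The function name `update_node` and the sample numbers are made up for illustration. Real networks generally use smoother rules than a hard flip, so treat this as the LED cartoon in code, not as GPT’s actual circuitry.

```python
def update_node(state, signals, weights, threshold):
    """Flip the node's state (0 or 1) if the weighted sum of incoming
    signals exceeds the threshold; otherwise leave the state alone."""
    total = sum(s * w for s, w in zip(signals, weights))
    return 1 - state if total > threshold else state

# Three wires coming in from the row above (illustrative values).
signals = [1, 0, 1]          # on, off, on
weights = [0.9, 0.4, 0.3]    # how wide each gate is open

# Weighted sum is 0.9 + 0.3 = 1.2, so the outcome depends on the threshold.
print(update_node(0, signals, weights, threshold=1.0))
print(update_node(0, signals, weights, threshold=1.5))
```

With the lower threshold the node flips on. With the higher one it stays off.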

Pretty simple, actually.

Now train the network. Feed it large volumes of text. Nowadays that includes huge chunks of the text available in documents and chats on the World Wide Web. Tell it to find patterns in the sequences of words. We want GPT to figure out the likeliest next word in a sequence of words and symbols. If it can do that it can generate compelling new text on its own. After it is trained, for example, if you ask it to “describe the historical significance of the Declaration of Independence,” it may start responding with something like “The Declaration of Independence was written by Thomas Jefferson in the summer of 1776.” It begins with “The Declaration of Independence” (because it sees that string of words in the query) “was written by Thomas Jefferson” (because it has seen that sequence of words associated with “Declaration of Independence” a zillion times in thousands of historical documents on the Web) “in the summer of 1776” (ditto – words following “Declaration” and “Jefferson” in thousands of texts), and so on. I’ve given the example in likely phrases, but GPT learns the most probable next word, word after word after word, as it studies the patterns of words on the Web.
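
To make “most probable next word” concrete, here is a toy sketch that simply counts which word follows which in a scrap of text. This is a much cruder method than a neural network and it is not how GPT works inside. The three-sentence corpus and the function names are invented for the example.

```python
from collections import Counter, defaultdict

def train_next_word_counts(text):
    """Count, for every word, how often each other word follows it."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def most_likely_next(counts, word):
    """Return the word seen most often after `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# A made-up scrap of training text.
corpus = (
    "the declaration of independence was written by thomas jefferson "
    "the declaration of independence was adopted in 1776 "
    "the declaration was signed in philadelphia"
)
counts = train_next_word_counts(corpus)
print(most_likely_next(counts, "declaration"))
print(most_likely_next(counts, "thomas"))
```

In this scrap, “of” follows “declaration” twice and “was” follows it once, so the counter picks “of.” GPT does something similar in spirit, but it weighs the whole preceding sequence and not just the one word before.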

An aside: Being a computer, the neural net doesn’t really read words like you and I do. It looks for patterns in the sequences of 1’s and 0’s by which computers calculate and store memory. Letters and symbols that we see on a monitor are processed as sequences of 1’s and 0’s in the computer. The neural network reads short strings of 1’s and 0’s as “tokens.” A token usually stands for a word or a piece of a word. We’ll stick to using “words” in our discussion. Neural nets recognize patterns of tokens, but that’s the same notion as recognizing patterns of words.
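
If you are curious what that looks like, here is a small sketch. The first part shows letters as the 1’s and 0’s a computer stores. The second part builds a toy vocabulary that gives each word a number. The one-number-per-word scheme is my simplification for illustration. It is not the tokenizer any GPT model actually uses.

```python
text = "The Declaration"

# Letters as the computer stores them: one byte each, shown as 8 bits.
bits = [format(byte, "08b") for byte in text.encode("utf-8")]
print(bits[:3])   # the bit patterns for "T", "h", "e"

# A toy vocabulary: every distinct word gets an integer ID (an assumption
# for illustration; real tokenizers often split words into smaller pieces).
words = text.lower().split()
vocab = {word: idx for idx, word in enumerate(sorted(set(words)))}
token_ids = [vocab[w] for w in words]
print(vocab)
print(token_ids)
```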

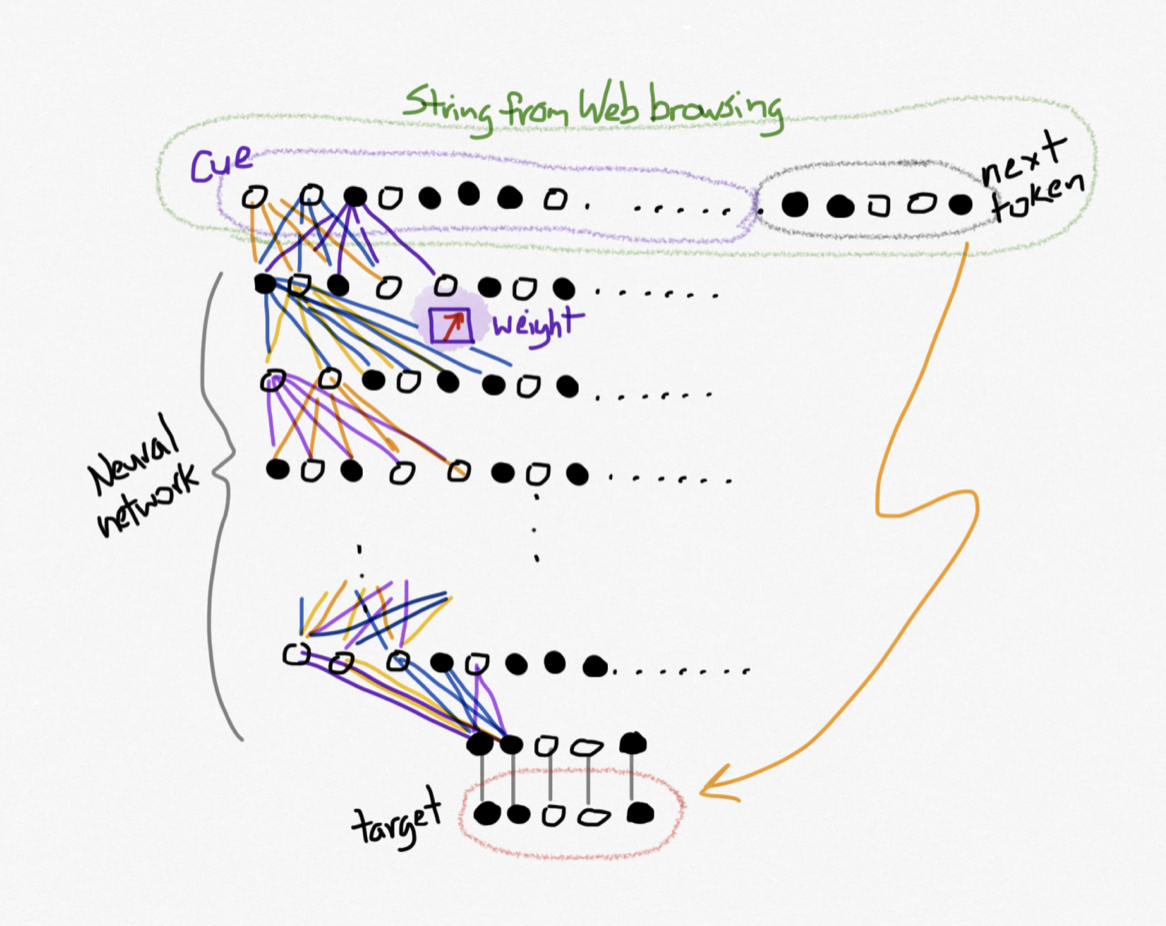

Back to training the GPT: The training algorithm runs out onto the Web and looks for a string of tokens, labeled the “cue” in Figure 1. Initially it just grabs a string of tokens it ran across by chance. It returns with that cue string plus the next token it found in that sequence on the Web. That next-token becomes the target for the neural network.
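
Here is a minimal sketch of cutting a passage into cue-and-target pairs. The function name `cue_target_pairs` and the fixed cue length of three words are assumptions made for the example. Real systems work on far longer cues.

```python
def cue_target_pairs(tokens, cue_length):
    """Slide a window along the text: each run of `cue_length` tokens is a
    cue, and the token that comes right after it is the target."""
    pairs = []
    for start in range(len(tokens) - cue_length):
        cue = tokens[start:start + cue_length]
        target = tokens[start + cue_length]
        pairs.append((cue, target))
    return pairs

tokens = "the declaration of independence was written by thomas jefferson".split()
pairs = cue_target_pairs(tokens, cue_length=3)
for cue, target in pairs:
    print(cue, "->", target)
```

One nine-word sentence yields six training examples this way, which hints at how much practice material a Web’s worth of text provides.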

To initialize processing, the neural network randomizes the weights in all the wires and enters the cue string into the top row of nodes. A pattern of signals percolates through the net, generated by those initial random weights. Imagine lights blinking on and off through the array. The last row is compared to the target. The computer measures how far off each node in the bottom row is from the target. Information from that measurement percolates backward along the wires, back up the neural net, and tweaks the weights along the way. The cycle repeats many millions of times – forward cascade of signals through the net, comparison to target, back-propagation to adjust the weights – until the last row of nodes matches the target. Then the search algorithm jumps back out on the Web to find a new cue and target. It resumes processing, starting from the weights it ended with in the previous round. Repeat. Grab the next block of text. Process. Adjust the weights. Repeat. Until you’ve retrieved all the text from the Web or until the GPT performs to standards.
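
The loop can be sketched in a few lines. To keep it readable I use a single layer of weights and one output number, so the backward pass is just one adjustment step. That is a drastic simplification of a deep network. The function name `train`, the learning rate, the step count and the sample cues are all assumptions of mine.

```python
import random

def train(cue, target, weights=None, steps=200, learning_rate=0.1, seed=0):
    """Repeat: forward pass, compare to target, nudge the weights."""
    if weights is None:
        rng = random.Random(seed)
        weights = [rng.uniform(-1, 1) for _ in cue]  # random starting weights
    for _ in range(steps):
        output = sum(x * w for x, w in zip(cue, weights))  # forward cascade
        error = output - target                            # how far off?
        # Adjust each weight against its share of the error
        # (the gradient of half the squared error).
        weights = [w - learning_rate * error * x for x, w in zip(cue, weights)]
    return weights

def forward(cue, weights):
    return sum(x * w for x, w in zip(cue, weights))

# Round one: random weights, first cue and target (illustrative numbers).
cue_1, target_1 = [1.0, 0.0, 1.0, 1.0], 1.0
weights = train(cue_1, target_1)
print(round(forward(cue_1, weights), 4))

# Round two: a new cue and target, starting from the weights just learned.
cue_2, target_2 = [0.0, 1.0, 1.0, 0.0], 0.0
weights = train(cue_2, target_2, weights=weights)
print(round(forward(cue_2, weights), 4))
```

One thing this toy leaves out: after round two, the answer for the first cue may have drifted, because both cues share a weight. Real training cycles through enormous numbers of examples so the weights settle on values that serve them all reasonably well.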

That’s the key. It’s the weights doing the learning. It’s the weights storing memory from one session to the next. With the huge connectivity available in trillions of paths, trillions of weight sequences, the neural net can store vast knowledge about grammar and syntax – and, it turns out, enable the GPT to do much more than find next words.

For the mathematicians among you, the neural network encodes a matrix. Feed that matrix a vector (in this case a sequence of tokens, the cue) and matrix multiplication calculates the next word in the sequence.
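
A toy version of that calculation looks like this. The vocabulary, the cue encoding and every number in the matrix are made up for illustration. A real network chains many such multiplications together with extra steps in between, so this is only the bare idea.

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]

# Each position in the cue vector flags whether a word appears in the cue.
cue_words = ["declaration", "of", "thomas"]
# Candidate next words, one matrix row of (made-up) weights per candidate.
vocabulary = ["independence", "jefferson", "1776"]
weights = [
    [0.6, 0.7, 0.0],   # independence
    [0.2, 0.0, 0.9],   # jefferson
    [0.3, 0.1, 0.1],   # 1776
]

def next_word(cue_vector):
    scores = matvec(weights, cue_vector)
    return vocabulary[scores.index(max(scores))]

print(next_word([1.0, 1.0, 0.0]))   # cue contains "declaration of"
print(next_word([0.0, 0.0, 1.0]))   # cue contains "thomas"
```

The candidate with the highest score wins. Training, in this picture, is nothing more than finding the numbers to put in the matrix.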

Take note: the weights in the wiring of the neural network determine the values in the nodes, and the neural network itself calculates those weights. No human supervision necessary. The machine writes its own code. And the humans watching the machine don’t necessarily understand what the machine is doing. It’s a black box. We can see the output – the next likely word in a sequence – but we don’t know how the neural net figured it out. In that regard, it’s like the human brain. We can observe the input, the trajectory of a baseball into left field, say. We can observe the output – run to position where we can catch the ball. But we can’t see what’s going on in the brain – neurons (nodes) connected by weighted axons (wires) – that figures out how to get to the right spot at the right time.

The dedicated chess-playing neural networks (e.g. AlphaZero) and Go neural networks (e.g. AlphaGo) are notorious for this. They routinely defeat the world’s best human players using strategies the humans never encountered before, strategies the humans never thought of. Grandmasters these days use the machines as tutors to sharpen their own skills.

And there’s the worry. The dedicated neural nets, i.e. nets specialized to perform a particular task like play chess, are better than the humans. That’s emergence. New behaviors that emerge from the neural nets. Behaviors that were not programmed into them. Behaviors that were not anticipated. Behaviors that exceed human capacities.

GPT-4 exhibits more general emergent behaviors, beyond just determining the best series of moves in chess. GPT-4 and its cousins may be able to out-think us in any number of realms, not just board games. The argument goes like this: language is the visible representation of our thought processes. Syntax and grammar capture the elements of thought. How we reason and solve problems can be expressed using those rules of language. It’s possible that whatever goes on inside our brains might be mirrored in the language. So if you can capture the rules of language in a neural network, you can capture the capacity for reason and problem solving . . . and consciousness? There be dragons.

There are excellent courses available online if you would like to learn more about neural networks. See, for example, Andrew Ng’s course on Coursera (Ng, 2023). Next week we’ll consider the benefits and risks of GPT as we understand them now. Later we’ll return to the possibility that these machines might create a world that no longer needs us humans.

References:

Dugas. 2023. GPT architecture on a napkin. https://dugas.ch/artificial_curiosity/GPT_architecture.html

Ng, Andrew. 2023. AI for everyone. https://www.coursera.org/learn/ai-for-everyone

OpenAI. 2023. GPT-4 technical report. https://arxiv.org/pdf/2303.08774.pdf

By BOB DORSETT | Special to the Herald Times